Send low-priced SMS to your customers now

The UK's leading SMS provider, supporting businesses of all sizes. Improve your customer experience, engagement and retention from just 4.3p per SMS.

Trusted by 35,000 customers, who send over 3 billion SMS messages a year, including:

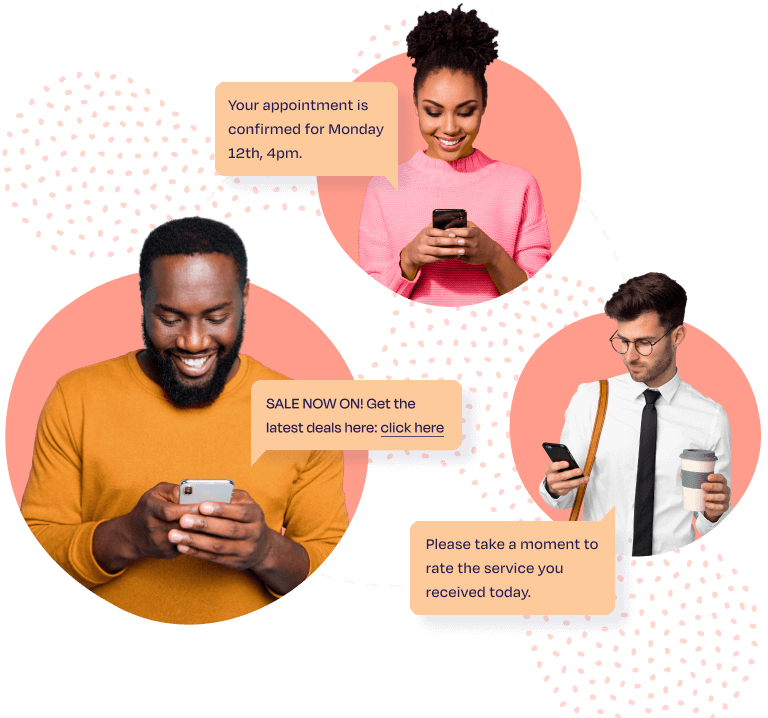

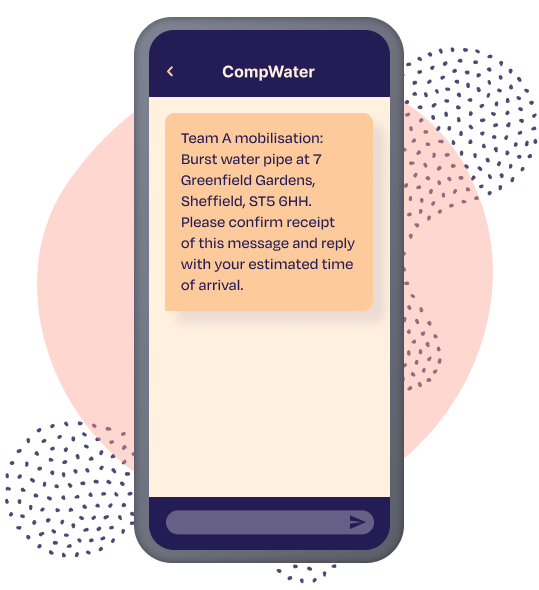

Bulk Messaging / Marketing / Text Alerts / Emergency SMS

Find out why we’re the UK's leading SMS provider

- Secure and easy-to-use SMS Platform.

- Send messages online, via email or by integrating with your own application for free.

- Improve your customer experience, engagement and retention by SMS.

- Instantly send individual or bulk SMS messages.

- Perfect for marketing campaigns and transactional messages.

Reliability & security

Unbeatable security and outstanding support

We love collecting badges! You’ll be safe with us!

ISO 27001 Certified

3.5 Billion Messages Sent Per Year

21 Years In Service

Get started online now

Start a FREE trial today

Take advantage of our years of expert SMS advice to add value, boost engagement and improve the ROI of your SMS campaigns.

- 20 free credits

- Full platform access

- No commitment or credit card required

- Messaging expert support

- 97% open rate

- Price match guarantee